Pawsey Supercomputing Centre will become home to one of the largest research-focused object storage systems in the world, with 130 petabytes of online and offline storage as part of the facility’s $70 million capital refresh project.

Pawsey has awarded two contracts, with a combined value of $7 million, to Dell and Xenon for multi-tier storage that will improve data availability, data transfer speed and overall storage capacity.

The new storage capability represents the second-biggest acquisition in Pawsey’s capital refresh behind the Setonix supercomputing system itself.

The acquisition adds object storage technologies to the Pawsey mix, making it easier for research groups to share and access data in flexible ways.

Mark Gray, Head of Scientific Platforms at Pawsey, said the decision to invest in the mix of object-based and offline storage was driven by shifts in the way people use and move data.

“When researchers want to make massive amounts of data available to users on the internet, any delay in accessing data is very hard to accommodate,” he says.

“Users and most web tools expect files to be immediately available and the time it takes for a tape system to retrieve data becomes a challenge.”

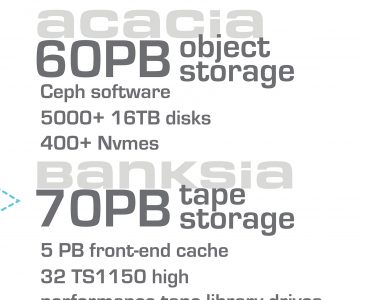

The 130 PB data storage system, currently being installed, includes:

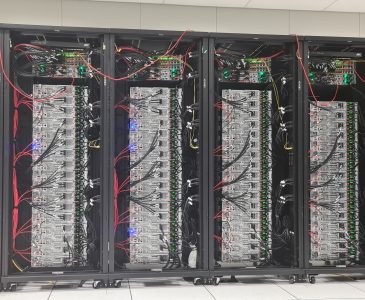

- A disk-based system powered by Dell, named Acacia after the genus of Australia's national floral emblem, the Golden Wattle (*Acacia pycnantha*), providing 60 PB of high-speed object storage for hosting research data online. This multi-tiered cluster separates different types of data to improve data availability.

- Offline storage provided by Xenon, named Banksia after another Australian wildflower, a genus of around 170 species in the plant family Proteaceae. It incorporates Pawsey's current object storage infrastructure, including two mirrored libraries each holding 70 PB of data, duplicated for data security.

The system has been designed to be both cost-effective and scalable.

To maximise value, Pawsey has invested in Ceph, software for building storage systems out of generic hardware, and has built its online storage infrastructure on Ceph in-house. As more servers are added, the online object storage becomes more stable, more resilient and even faster.

“That’s how we were able to build a 60 PB system on this budget,” explains Gray.

“An important part of this long-term storage upgrade was to demonstrate how it can be done in a financially scalable way. In a world of mega-science projects like the Square Kilometre Array, we need to develop more cost-effective ways of providing massive storage.”

The latest evolution in Pawsey’s data storage infrastructure should be ready for user migration before the end of 2021.

“Both Xenon and Dell have worked with us to build a storage system that is not only fit for purpose now, but is flexible and will scale easily in capacity and performance to grow with our needs into the future,” Gray says.

The backplane of Acacia, Pawsey's new object storage system for research, exposes the cabling and high-speed networking essential to building this new, state-of-the-art infrastructure.

Specifications for the new storage capability at Pawsey